ORDS provides insight to its database pool cache through the ORDS Instance API. This is extremely useful for monitoring the database connection usage on a particular ORDS instance. Such information could reveal how close ORDS gets to using up its available connections as a result of the requests coming in and their correspond SQL statements. That would indicate if more resources or optimisations are required.

The ORDS Instance API is intended for System Administrator use in the deployed infrastructure. Users with this role are extremely powerful. Therefore the credentials for such users are extremely sensitive and how they are used should be carefully considered.

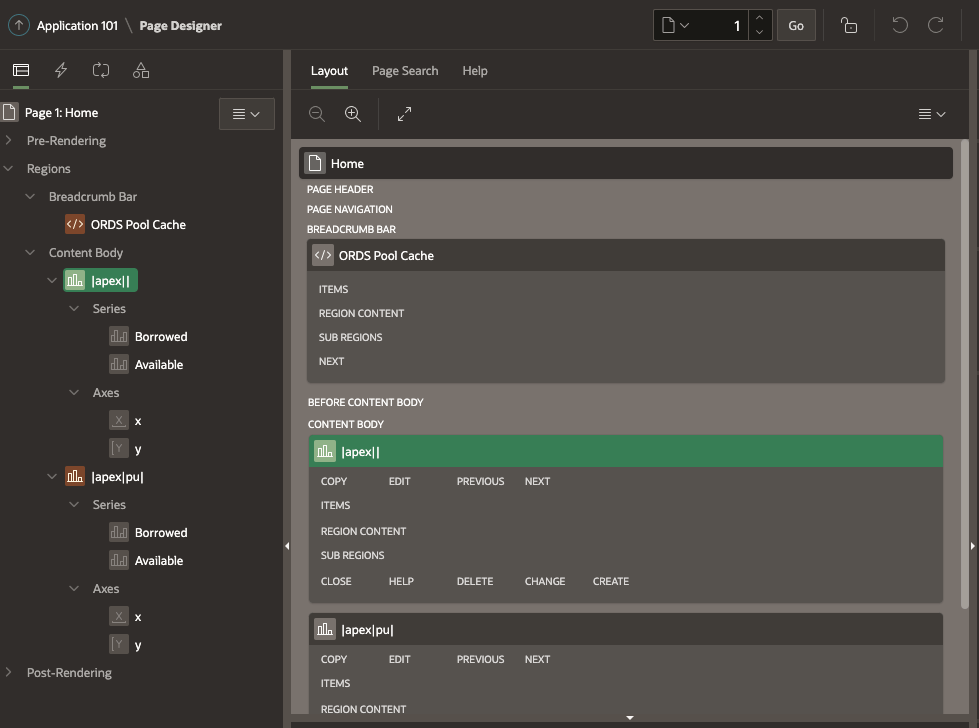

This article introduces an example application which shows how APEX could be used to retrieve the Database Pool Cache information with ORDS System Administrator credentials secured. The number of available and borrowed connections for both the APEX_PUBLIC_USER and ORDS_PUBLIC_USER database accounts are retrieved every 10 minutes and a chart of the data displayed.

Configure ORDS Instance API

Stating the obvious here, but to get the database pool cache information from ORDS, the Instance API has to be enabled first. The steps for doing so are already covered in the product documentation but I’m going to call it out as prerequisite for this exercise. Also, just to repeat the product documentation, using the credentials file ( also known as: File Based User Repository ) is not intended for production use.

Keep secrets secret

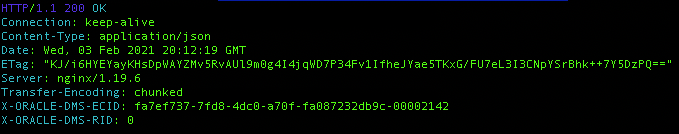

In my case I defined a user called sysadmin with the System Administrator role and whose credentials will be provided on every request. To keep the credentials separate from the APEX application I’ll define them in the workspace and state that these credentials can only be used for Instance API requests. That means the same credentials could not be used for other ORDS services such as the Oracle Home information and DBCA operations available through the Database API environment category of services.

About that URL

At this point you’ve probably noticed the host.docker.internal portion of the URL. I’m using that because I’m running the database in a docker container hosted on the same machine that ORDS is running on. If I was to refer to localhost, that would be the docker container. The host.docker.internal identifies the machine hosting the container. Docker provides a number of other networking options here but this is the simplest for my setup.

In this case, ORDS standalone is running on port 8080 on that machine. We’ll use this URL again when defining a REST Data Source in our APEX application.

>java -jar ords.war standalone 2021-10-11T09:23:33.448Z INFO HTTP and HTTP/2 cleartext listening on host: localhost port: 8080 2021-10-11T09:23:33.466Z INFO Disabling document root because the specified folder does not exist: /scratch/ords-21.3.0.266.1100/config/ords/standalone/doc_root 2021-10-11T09:23:35.052Z INFO Configuration properties for: |apex|pu| database.api.enabled=true db.connectionType=basic db.hostname=localhost db.port=1521 db.servicename=orclpdb1 feature.sdw=true instance.api.enabled=true restEnabledSql.active=true db.password=****** db.username=ORDS_PUBLIC_USER 2021-10-11T09:23:35.053Z WARNING *** jdbc.MaxLimit in configuration |apex|pu| is using a value of 10, this setting may not be sized adequately for a production environment *** 2021-10-11T09:23:35.054Z WARNING *** jdbc.InitialLimit in configuration |apex|pu| is using a value of 3, this setting may not be sized adequately for a production environment *** 2021-10-11T09:23:36.053Z INFO Configuration properties for: |apex|| database.api.enabled=true db.connectionType=basic db.hostname=localhost db.port=1521 db.servicename=orclpdb1 misc.compress= misc.defaultPage=apex security.disableDefaultExclusionList=false security.maxEntries=2000 security.requestValidationFunction=wwv_flow_epg_include_modules.authorize security.validationFunctionType=plsql db.password=****** db.username=APEX_PUBLIC_USER resource.templates.enabled=true 2021-10-11T09:23:36.054Z WARNING *** jdbc.MaxLimit in configuration |apex|| is using a value of 10, this setting may not be sized adequately for a production environment *** 2021-10-11T09:23:36.054Z WARNING *** jdbc.InitialLimit in configuration |apex|| is using a value of 3, this setting may not be sized adequately for a production environment *** 2021-10-11T09:23:37.664Z INFO Oracle REST Data Services initialized Oracle REST Data Services version : 21.3.0.r2661100 Oracle REST Data Services server info: jetty/9.4.43.v20210629

Permit the request

At this stage it should be clear that we have a database running in a docker container and ORDS standalone running on the same machine which is hosting that container. ORDS has two pools configured to talk to the database at localhost:1521/orclpdb1.

In my case, I started an Oracle Enterprise Edition 19c database using the image from https://container-registry.oracle.com/ and installed APEX 21.1 to it.

If we were to go ahead and define the APEX REST Data Source at this stage we might encounter this error once we try to test it.

ORA-24247: network access denied by access control list (ACL)

Put simply, the APEX application does not have permission to make a request from the database to the host on port 8080. A network access control list must be defined. In my case I am running APEX 21.1 and therefore will grant access to the APEX_210100 database user. If you have a different version of APEX, you will have a different user in this scenario. To define the ACL and assign it run this script in the database…

DECLARE

l_principal VARCHAR2(20) := 'APEX_210100';

BEGIN

DBMS_NETWORK_ACL_ADMIN.create_acl (

acl => 'hosted_ords_acl.xml',

description => 'An ACL for the hosted ORDS instance',

principal => l_principal,

is_grant => TRUE,

privilege => 'connect',

start_date => SYSTIMESTAMP,

end_date => NULL);

DBMS_NETWORK_ACL_ADMIN.assign_acl (

acl => 'hosted_ords_acl.xml',

host => 'host.docker.internal',

lower_port => 8080,

upper_port => 8080);

COMMIT;

END;

APEX Application

For this example I created a simple database application with just a home page which requires authentication. This means that for someone to access the charts they must authenticate with APEX. At no point will they need the sysadmin credentials. The home page will display two charts showing data from a synchronisation table which is appended to every 10 minutes with the connection pool state at that point in time.

REST Data Source

In that application Shared Components define a REST Data Source which will automatically make a call every 10 minutes to add records to a table.

The REST Data Source type should be Simple HTTP because there’s no need for pagination or to perform any additional operations such as POST, PUT, or DELETE. Note that the Base URL is the same value as specified for the Web Credential earlier and that ORDS System Administrator web credential is selected in the Authentication section.

Synchronisation must also be defined so that the REST call is made on a regular basis to append to a DATABASE_POOL_CACHE table. Going through the synchronisation setup wizard will result in this table being created.

The table name DATABASE_POOL_CACHE comes from the /database-pools-cache/ portion of the endpoint URL. You can choose to name it whatever suits you but you will be referring to this table later when constructing the charts.

CREATE TABLE "DATABASE_POOL_CACHE" ( "ID" VARCHAR2(4000), "CREATED" TIMESTAMP (6) WITH TIME ZONE, "VALIDITY" VARCHAR2(4000), "BORROWEDCONNECTIONSCOUNT" NUMBER, "AVAILABLECONNECTIONSCOUNT" NUMBER, "APEX$SYNC_STEP_STATIC_ID" VARCHAR2(255), "APEX$ROW_SYNC_TIMESTAMP" TIMESTAMP (6) WITH TIME ZONE ) /

Every ten minutes the APEX REST Data Source synchronisation job will run. It will identify any synchronisation activity that is required and start making the necessary web service requests.

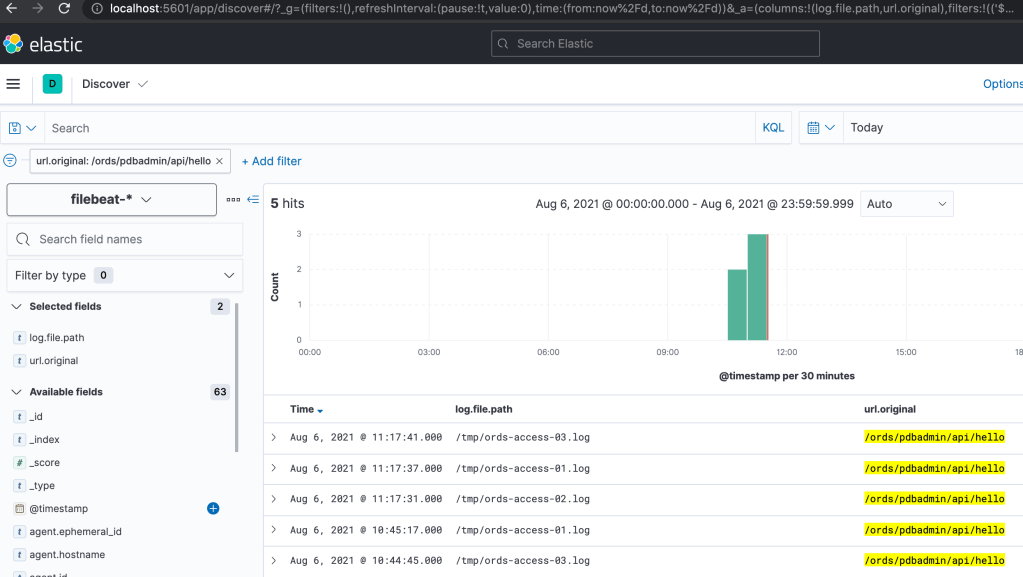

The ID column contains the pool identifier. This column, along with the BORROWEDCONNECTIONSCOUNT, AVAILABLECONNECTSIONCOUNT and APEX$ROW_SYNC_TIMESTAMP will be used to chart the connection pool usage over time.

Application Home Page

Edit the home page and add a Chart instance onto the Content Body. You can first define a chart for |apex|| and then duplicate it for |apex|pu|. These represent the APEX_PUBLIC_USER and ORDS_PUBLIC_USER connection pools respectively.

For the |apex|| chart go to the Attributes section and set it as a Line chart with a 600 second automatic refresh.

Then define a Borrowed series which uses the BORROWEDCONNECTIONSCOUNT column on DATABASE_POOL_CACHE as the value to display. The Order By specifying the Row Sync Timestamp ensures that data is shown in the correct sequence.

Similarly, define a series called Available using AVAILABLECONNECTIONSCOUNT column. Note that the Where Clause restricts the records to just those applicable to the |apex||.

When duplicating this chart for |apex|pu| it is the Where Clause that must change.

Conclusion

You now have an APEX Application with a Home Page that is secured. Data from the DATABASE_POOL_CACHE table is shown in a chart for both pools. That data is obtained from the ORDS Instance API database pool cache endpoint. If additional pools are added to the ORDS instance the information for them will also appear in the table. However, they way this APEX application is configured it will not display any information about such new pools unless you specifically add a chart for it.

Here’s the key point though, no information about the sysadmin user credentials are exposed outside of the workspace.

Now try it out. Define an ORDS Based REST Service and use something like JMeter to call it multiple times concurrently over 20 or 30 minutes. You should see the number of borrowed connections go up and the number of available connections go down. Once the high number of requests ends the available connections increases but then drops down as the unused database connections are closed.

If going through those few APEX Application creation steps is too much you can just import the application using this ORDS_Database_Cache_APEX_App.sql script.