For high availability, scalability, and efficient use of computing resources, many systems are deployed behind a load balancer, which acts as the client-facing interface and distributes the request load.

The product documentation for Oracle REST Data Services does not go into detail on how to put a load balancer in front of your ORDS instances, because that documentation would mostly cover the configuration specifics of another product: the load balancer of your choice.

Kris Rice has provided detailed steps on using ORDS with Consul and the Fabio load balancer, an excellent approach that requires very little configuration.

In this article, I cover what is perhaps the quickest way to spin up a load balancer in front of your ORDS instances: NGINX with a load balancing configuration, run from the official NGINX Docker image.

In my scenario, I have ORDS running in WebLogic Server on one machine and in Tomcat on another: server1:7001 and server2:8888 respectively. This isn’t a typical setup, but because the headers returned by the two containers are slightly different, it is easier to see which server handled a request. Both ORDS instances are configured to talk to the same database, where the HR schema has an AutoREST-enabled EMPLOYEES table, accessible at /ords/hr/employees/.

This nginx.conf has the bare minimum to get up and running.

    events {}

    http {
        upstream ords {
            server server1:7001;
            server server2:8888;
        }

        server {
            location / {
                proxy_pass http://ords;
                proxy_set_header Host $host;
            }
        }
    }

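NGINX will route each request to one of the upstream servers in round-robin fashion. Note the proxy_set_header directive: it passes the host name from the client's request on to ORDS, so whichever instance handles the request knows what URL the client used. ORDS relies on this to generate valid absolute link relation URLs in the response. Be aware that $host does not include the port, so if clients reach NGINX on a non-default port, $http_host (the Host header exactly as the client sent it) may be the better choice.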

Assuming your working directory is the one that contains this nginx.conf, just spin up a Docker container:

    docker run -p 8080:80 -v "${PWD}/nginx.conf:/etc/nginx/nginx.conf:ro" -d nginx

The port mapping is up to you. By default, NGINX in the container listens on port 80, and in this case I have mapped it to port 8080 on the host machine.
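
If the container exits straight away, a syntax error in nginx.conf is a likely cause. As a rough sketch, the config can be checked with the nginx binary inside the same image before starting the real container. The function name check_nginx_conf is my own and purely illustrative. This assumes the path passed in is absolute, and that server1 and server2 resolve from inside the container, since NGINX looks up upstream host names when it tests the config.

```shell
# Validate an nginx.conf using "nginx -t" from the official image.
# check_nginx_conf is a hypothetical helper name, not part of NGINX or Docker.
check_nginx_conf() {
  # Default to nginx.conf in the current directory; bind mounts want an absolute path.
  conf="${1:-${PWD}/nginx.conf}"
  # --rm removes the throwaway container once the test has finished.
  docker run --rm -v "${conf}:/etc/nginx/nginx.conf:ro" nginx nginx -t
}

# Usage:
#   check_nginx_conf "${PWD}/nginx.conf"
```

A zero exit status means NGINX considers the file syntactically valid; it says nothing about whether the ORDS instances are actually reachable.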

Now a request to http://localhost:8080/ords/hr/employees/ will be routed to whichever server is next in the round-robin list.

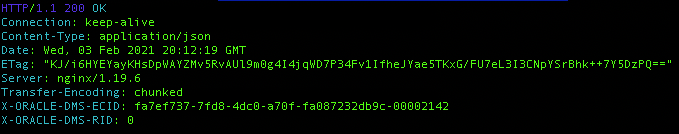

Note that in both cases the ETag header is the same because the same response body is being returned to the client each time for the same URL http://localhost:8080/ords/hr/employees/.
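
To see the same thing from the command line, something like the following sketch can help. It only prints response headers, so you can compare them across consecutive requests. The function names show_headers and sample_requests are my own, and the URL in the usage comment assumes the port mapping used in this article.

```shell
# Print only the response headers for a URL, discarding the body.
show_headers() {
  curl -sS -o /dev/null -D - "$1"
}

# Send several requests in a row and print the headers of each response.
# With two upstream servers and round-robin, consecutive responses
# should typically come from alternating servers.
sample_requests() {
  url="$1"
  count="${2:-4}"
  i=1
  while [ "$i" -le "$count" ]; do
    echo "--- request $i ---"
    show_headers "$url"
    i=$((i + 1))
  done
}

# Usage (assumes the NGINX container from this article is running):
#   sample_requests http://localhost:8080/ords/hr/employees/ 4
```

Which headers differ depends on your WebLogic and Tomcat versions, so the sketch prints them all rather than filtering for a particular one.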

It’s that quick and easy. I’ll leave it to you to discover what happens when one or both ORDS instances are stopped, and to consult the NGINX documentation on configuring it for high availability.
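
As a starting point for that experiment, here is a hedged sketch of a variation on the config that makes the failover behaviour explicit. The file name nginx-ha.conf and the values 3 and 30s are illustrative assumptions, not recommendations; max_fails, fail_timeout, and proxy_next_upstream are standard NGINX settings, but check the NGINX documentation for what suits your environment.

```shell
# Write an alternative config with passive health check settings.
# The quoted 'EOF' stops the shell from expanding $host.
cat > nginx-ha.conf <<'EOF'
events {}

http {
    upstream ords {
        # After 3 failed attempts within 30s, skip this server for 30s.
        server server1:7001 max_fails=3 fail_timeout=30s;
        server server2:8888 max_fails=3 fail_timeout=30s;
    }

    server {
        location / {
            proxy_pass http://ords;
            proxy_set_header Host $host;
            # Try the next upstream server on connection errors or timeouts.
            proxy_next_upstream error timeout;
        }
    }
}
EOF
```

Mounting nginx-ha.conf in place of nginx.conf in the docker run command, then stopping one ORDS instance, should show requests continuing to be served by the remaining one.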

The next article in this series, HTTPS Load Balance: NGINX & ORDS, builds on this NGINX setup and goes through the steps of generating a self-signed certificate so that traffic can be encrypted over HTTPS.
