Have you ever had your application completely crash under load and wondered why something that just worked fine yesterday can drive you mental today?

Most of the time an Object-Relational Mapping (ORM) package is the right way for your applications to interact with a database. Whether it is free, commercial or home grown, you will generally find that it reduces the amount of code. Moreover, the application code tends to be better designed and easier to test. Depending on your chosen solution, you may even see performance improvements from caching and database-specific optimisations.

However, you may also find substantial performance degradation when the data volume increases. It is important to remember that no matter how sophisticated your ORM package is, it may still produce inefficient SQL statements. If you had SQL statements embedded in your code, you would examine them as part of the code review. So if you are going to hand over a critical operation such as the generation of SQL statements to an ORM, the statements it generates need to be reviewed too.

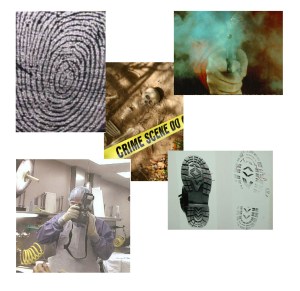

Your application can become a performance crime scene. What can you do to prevent it? Early analysis.

The execution plan, sometimes called a query plan or explain plan, for an SQL statement provides insight into how the database will act on that statement. It is available in most databases and is a useful tool for determining the efficiency of the statement. For example, an execution plan can indicate which indexes would be used. When code is being reviewed, the execution plans for its SQL statements should also be reviewed, in much the same way that the test classes would be reviewed for correctness.
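As a rough illustration of what reviewing an execution plan can look like, here is a minimal sketch using Python's built-in `sqlite3` module and SQLite's `EXPLAIN QUERY PLAN`. The `cheques` table, its columns and the index name are assumptions made up for the example; other databases expose their plans through different commands and report them in different formats.

```python
import sqlite3


def explain(conn, sql, params=()):
    """Return the human-readable 'detail' column of SQLite's query plan."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # Each plan row is a 4-tuple; the description is the last element.
    return [row[3] for row in rows]


# Hypothetical schema, used only for this illustration.
plan_conn = sqlite3.connect(":memory:")
plan_conn.execute(
    "CREATE TABLE cheques (row_id INTEGER PRIMARY KEY, parent_content_id INTEGER)"
)

query = "SELECT row_id FROM cheques WHERE parent_content_id = ?"

# Without an index on parent_content_id the plan reports a full table scan.
plan_before = explain(plan_conn, query, (1,))

plan_conn.execute(
    "CREATE INDEX idx_cheques_parent ON cheques (parent_content_id)"
)

# With the index in place the plan names the index it intends to use.
plan_after = explain(plan_conn, query, (1,))

print("before:", plan_before)
print("after: ", plan_after)
```

The exact wording of the plan varies between SQLite versions, so a review should look for the presence of a scan or a named index rather than match the text literally.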

I learned this the hard way when I found that a delete operation took too long and eventually timed out in a test environment that had a high volume of records. A review of the code showed a nice, clean object-oriented design and a very simple, easy to understand ORM configuration. The application in question was used to manage financial documents. It had the concept of a ChequeBook containing one or more Cheques, and both a ChequeBook and a Cheque were also a Document. The class diagram in this article illustrates that relationship. The relationship was also reflected in the database with corresponding tables. The ‘type’ attribute was used as a discriminator for the two concrete objects: ChequeBook and Cheque.

The problem arose when attempting to delete a ChequeBook. The ORM could delete the Cheque records using the parentContentId easily enough, but since there was no corresponding column on the Documents table, the ORM then produced this innocuous-looking statement:

    DELETE FROM DOCUMENTS
    WHERE TYPE = 'CHEQUE' AND
    ROW_ID NOT IN (SELECT ROW_ID FROM CHEQUES)

It appears quite innocent at first; in fact, it might even be thought a rather clever attempt to clean up all the records on the DOCUMENTS table that should have a corresponding CHEQUES record but don’t. In the development database, with only a few hundred CHEQUES, it performs acceptably. Push that up to a few thousand and you soon realise the problem with running this blanket SELECT over CHEQUES, even if it does use an index: the statement checks every CHEQUE document in the database rather than only those belonging to the ChequeBook being deleted.

In this case I had to code around the ORM with a hand-crafted SQL statement. I could have just invoked remove() on each Cheque entity, but for large ChequeBooks this would have produced a lot of SQL statements. Another approach could have been to use a stored procedure, but that would not have been easily portable.
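The hand-crafted statement itself is not reproduced here, but one plausible shape for such a workaround is sketched below with Python's `sqlite3` module. Everything in it is an assumption for illustration: the table layout, the `parent_content_id` column, the `'CHEQUEBOOK'` discriminator value and the `delete_cheque_book` helper are hypothetical, and the real application used an ORM rather than raw connections. The idea is simply to scope the delete on DOCUMENTS to the Cheques of one ChequeBook instead of comparing against every row in CHEQUES.

```python
import sqlite3


def delete_cheque_book(conn, book_id):
    """Delete one cheque book, its cheques and their document rows.

    Hypothetical helper: assumes a cheque's row_id equals the row_id of
    its DOCUMENTS row, and that a cheque book's id is its DOCUMENTS row_id.
    """
    with conn:  # commit on success, roll back on error
        # Remove only the DOCUMENTS rows for cheques in this book,
        # found through the indexed parent_content_id column.
        conn.execute(
            "DELETE FROM documents WHERE row_id IN "
            "(SELECT row_id FROM cheques WHERE parent_content_id = ?)",
            (book_id,),
        )
        conn.execute(
            "DELETE FROM cheques WHERE parent_content_id = ?", (book_id,)
        )
        conn.execute(
            "DELETE FROM documents WHERE row_id = ? AND type = 'CHEQUEBOOK'",
            (book_id,),
        )


# Assumed schema and sample data, for demonstration only.
db = sqlite3.connect(":memory:")
db.executescript("""
    CREATE TABLE documents (row_id INTEGER PRIMARY KEY, type TEXT NOT NULL);
    CREATE TABLE cheques (
        row_id INTEGER PRIMARY KEY,
        parent_content_id INTEGER NOT NULL
    );
    CREATE INDEX idx_cheques_parent ON cheques (parent_content_id);
    INSERT INTO documents VALUES
        (1, 'CHEQUEBOOK'), (2, 'CHEQUEBOOK'),
        (10, 'CHEQUE'), (11, 'CHEQUE'), (20, 'CHEQUE');
    INSERT INTO cheques VALUES (10, 1), (11, 1), (20, 2);
""")

delete_cheque_book(db, 1)

remaining_documents = [
    r[0] for r in db.execute("SELECT row_id FROM documents ORDER BY row_id")
]
remaining_cheques = [
    r[0] for r in db.execute("SELECT row_id FROM cheques ORDER BY row_id")
]
print(remaining_documents, remaining_cheques)
```

Three statements are issued regardless of how many Cheques the ChequeBook holds, which avoids both the blanket subquery and the one-statement-per-entity cost of calling remove() repeatedly.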

The key lesson learned, though, was to sanity check the ORM before it drives you crazy with its unexpected eccentricities. Check your ORM documentation to see how you can get profile details of the SQL statements generated. Go to your database server and get reports on SQL execution plans. The ORM is effectively another developer on your team generating SQL statements. Know and understand what it is producing.
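How you capture generated SQL depends entirely on your ORM, so consult its documentation for the real mechanism. Purely as an illustration of the idea, the sketch below records every statement that reaches the database using the `set_trace_callback` hook on a Python `sqlite3` connection; the table and the statements are hypothetical stand-ins for whatever an ORM would emit.

```python
import sqlite3

# Every statement SQLite executes on this connection is appended here.
captured_sql = []

trace_conn = sqlite3.connect(":memory:")
trace_conn.set_trace_callback(captured_sql.append)

# Hypothetical statements standing in for ORM-generated SQL.
trace_conn.execute(
    "CREATE TABLE cheques (row_id INTEGER PRIMARY KEY, parent_content_id INTEGER)"
)
trace_conn.execute("DELETE FROM cheques WHERE parent_content_id = ?", (1,))
trace_conn.commit()

# Review the log: these are the statements worth running through
# the database's execution plan tooling.
for statement in captured_sql:
    print(statement)
```

The log may also contain transaction control statements issued implicitly by the driver, which is itself useful to know when reviewing what actually runs against the database.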